Kryptonite for Apache Kafka

Client-Side Field Level Cryptography (CSFLC) for Data Streaming Workloads

Encrypt and decrypt payload fields end-to-end so that sensitive data never reaches the Kafka brokers in plaintext.

Seamless integrations with and across Apache Kafka Connect, Apache Flink, ksqlDB, a standalone Quarkus HTTP service, and a dedicated Kroxylicious filter.

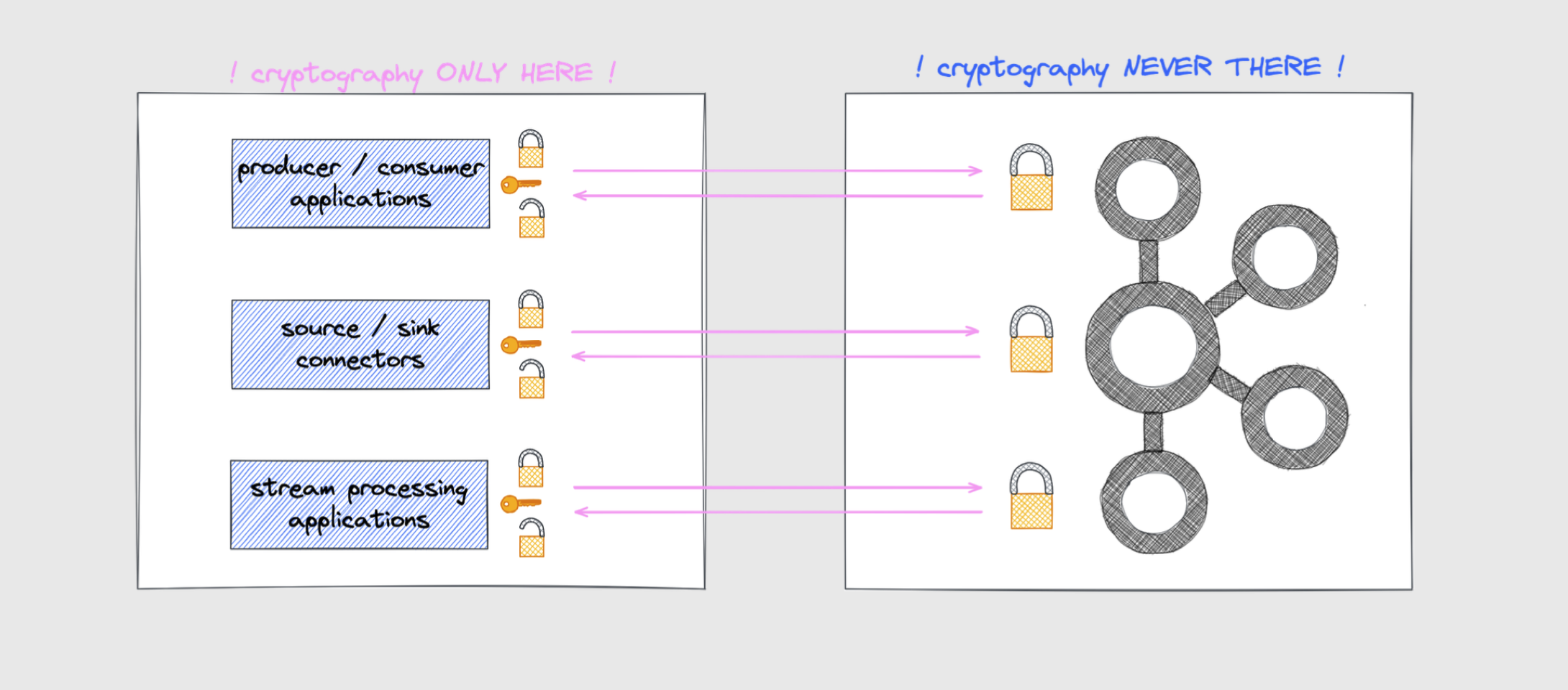

Client-side cryptography means that all cryptographic operations are guaranteed to happen exclusively on the client side.

Why Kryptonite for Apache Kafka?


True Client-Side Encryption

Sensitive plaintext never leaves the client-side application.

Neither Kafka brokers nor any intermediary gateway or reverse proxy infrastructure ever gets to see sensitive payload fields in plaintext. Encryption and decryption happen only within the security perimeter of the client application.


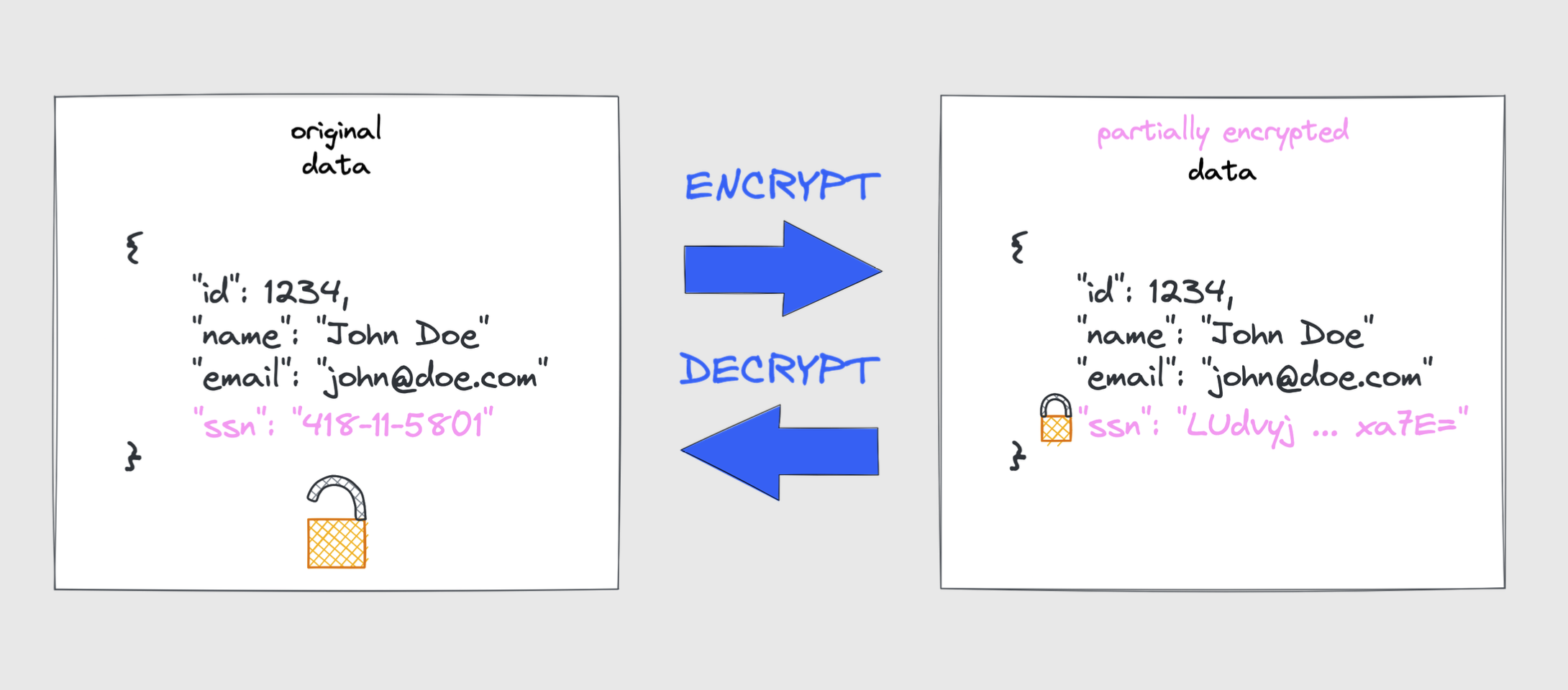

Field-Level Scope

Configure which payload fields need this extra level of data protection.

It's not an all-or-nothing approach. Need to encrypt just one field, a handful of fields, or all payload fields? Everything else stays untouched and remains directly processable by downstream applications.
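To make the field-level idea concrete, here is a minimal sketch in plain Java. It is not the Kryptonite for Kafka API: the class and method names (`FieldLevelEncryptionSketch`, `encryptFields`) are hypothetical, the sketch uses JDK `javax.crypto` rather than Tink, and it assumes a record is a simple `Map<String, Object>` whose selected values are stringified before AES-GCM encryption. Only the fields named in the set are replaced by Base64 ciphertext; all other fields pass through unchanged.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Base64;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical illustration only, not the Kryptonite for Kafka API:
// encrypts just the configured fields of a record and leaves all other fields untouched.
public final class FieldLevelEncryptionSketch {
    private static final int NONCE_BYTES = 12; // 96-bit nonce, the common choice for AES-GCM
    private static final int TAG_BITS = 128;   // 128-bit authentication tag
    private static final SecureRandom RANDOM = new SecureRandom();

    static SecretKey newKey() {
        try {
            KeyGenerator gen = KeyGenerator.getInstance("AES");
            gen.init(256); // 256-bit AES key
            return gen.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    // Returns a copy of the record in which each selected field becomes Base64(nonce || ciphertext || tag).
    static Map<String, Object> encryptFields(Map<String, Object> record, Set<String> fields, SecretKey key) {
        Map<String, Object> out = new LinkedHashMap<>();
        for (Map.Entry<String, Object> e : record.entrySet()) {
            Object value = e.getValue();
            // Simplification (assumption): values are stringified; real type handling is out of scope here.
            out.put(e.getKey(), fields.contains(e.getKey()) ? encrypt(String.valueOf(value), key) : value);
        }
        return out;
    }

    static String encrypt(String plaintext, SecretKey key) {
        try {
            byte[] nonce = new byte[NONCE_BYTES];
            RANDOM.nextBytes(nonce); // fresh random nonce per call
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, nonce));
            byte[] ct = cipher.doFinal(plaintext.getBytes(StandardCharsets.UTF_8)); // ciphertext with tag appended
            byte[] out = new byte[NONCE_BYTES + ct.length];
            System.arraycopy(nonce, 0, out, 0, NONCE_BYTES);
            System.arraycopy(ct, 0, out, NONCE_BYTES, ct.length);
            return Base64.getEncoder().encodeToString(out);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    static String decrypt(String encoded, SecretKey key) {
        try {
            byte[] in = Base64.getDecoder().decode(encoded);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.DECRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, in, 0, NONCE_BYTES));
            byte[] pt = cipher.doFinal(in, NONCE_BYTES, in.length - NONCE_BYTES);
            return new String(pt, StandardCharsets.UTF_8);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        SecretKey key = newKey();
        Map<String, Object> record = new LinkedHashMap<>();
        record.put("id", 42);
        record.put("ssn", "123-45-6789");
        Map<String, Object> encrypted = encryptFields(record, Set.of("ssn"), key);
        System.out.println(encrypted); // "id" stays 42, "ssn" is now a Base64 string
        System.out.println(decrypt((String) encrypted.get("ssn"), key)); // 123-45-6789
    }
}
```

A production implementation would also preserve each field's original data type so it can be restored on decryption, which this sketch deliberately skips.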


Flexible Key Management

You decide how to manage cryptographic keys.

Quickly need to inline keysets for development? Need to store keysets in a cloud key management system (KMS)? Want to encrypt keysets with a key encryption key (KEK) stored in a cloud provider's KMS? GCP Cloud KMS, AWS KMS, and Azure Key Vault are supported; the choice is yours!


Ready-Made Integrations

Choose from Apache Kafka Connect SMTs, Apache Flink UDFs, ksqlDB UDFs, a Quarkus Funqy HTTP service, and a dedicated Kroxylicious filter. No custom serializers or custom code are needed, because flexible configuration options drive all encryption and decryption capabilities.


Versatile Encryption Capabilities

By default, all modules apply probabilistic encryption with 256-bit AES GCM. If your use case requires deterministic encryption, you can choose AES GCM SIV. For format-preserving encryption, FF3-1 is currently available.
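Why the choice between probabilistic and deterministic encryption matters can be sketched with JDK crypto alone. This is neither Tink nor the project's code, and the class name is hypothetical. Because every AES-GCM encryption uses a fresh random nonce, encrypting the same value twice yields different ciphertexts. That is why equality matches or joins on such ciphertexts do not work, and why a deterministic option such as AES GCM SIV is offered for those cases.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Arrays;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical sketch with plain JDK crypto (not Tink, not the Kryptonite API):
// probabilistic AES-GCM produces a different ciphertext for the same plaintext on every call.
public final class ProbabilisticEncryptionSketch {
    private static final int NONCE_BYTES = 12; // 96-bit random nonce
    private static final int TAG_BITS = 128;   // 128-bit authentication tag
    private static final SecureRandom RANDOM = new SecureRandom();

    static SecretKey newKey() {
        try {
            KeyGenerator gen = KeyGenerator.getInstance("AES");
            gen.init(256);
            return gen.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    // Output layout: nonce || ciphertext || tag
    static byte[] encrypt(byte[] plaintext, SecretKey key) {
        try {
            byte[] nonce = new byte[NONCE_BYTES];
            RANDOM.nextBytes(nonce);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, nonce));
            byte[] ct = cipher.doFinal(plaintext);
            byte[] out = Arrays.copyOf(nonce, NONCE_BYTES + ct.length); // nonce first, zero-padded to full size
            System.arraycopy(ct, 0, out, NONCE_BYTES, ct.length);
            return out;
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    static byte[] decrypt(byte[] in, SecretKey key) {
        try {
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.DECRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, in, 0, NONCE_BYTES));
            return cipher.doFinal(in, NONCE_BYTES, in.length - NONCE_BYTES);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        SecretKey key = newKey();
        byte[] email = "alice@example.com".getBytes(StandardCharsets.UTF_8);
        byte[] c1 = encrypt(email, key);
        byte[] c2 = encrypt(email, key);
        // Different nonces make the ciphertexts differ, so comparing or joining on them fails.
        System.out.println("ciphertexts equal: " + Arrays.equals(c1, c2));
        // Both still decrypt to the same plaintext.
        System.out.println("plaintexts equal: " + Arrays.equals(decrypt(c1, key), decrypt(c2, key)));
    }
}
```

The first comparison is expected to print `false` (a collision of two random 96-bit nonces is vanishingly unlikely), and the second prints `true`.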


Built on top of Google Tink

Cryptographic primitives come directly from Tink, a vetted and widely deployed open-source cryptography library implemented and maintained by cryptographers and security engineers at Google. To learn more, read What is Tink?

Five Integration Modules

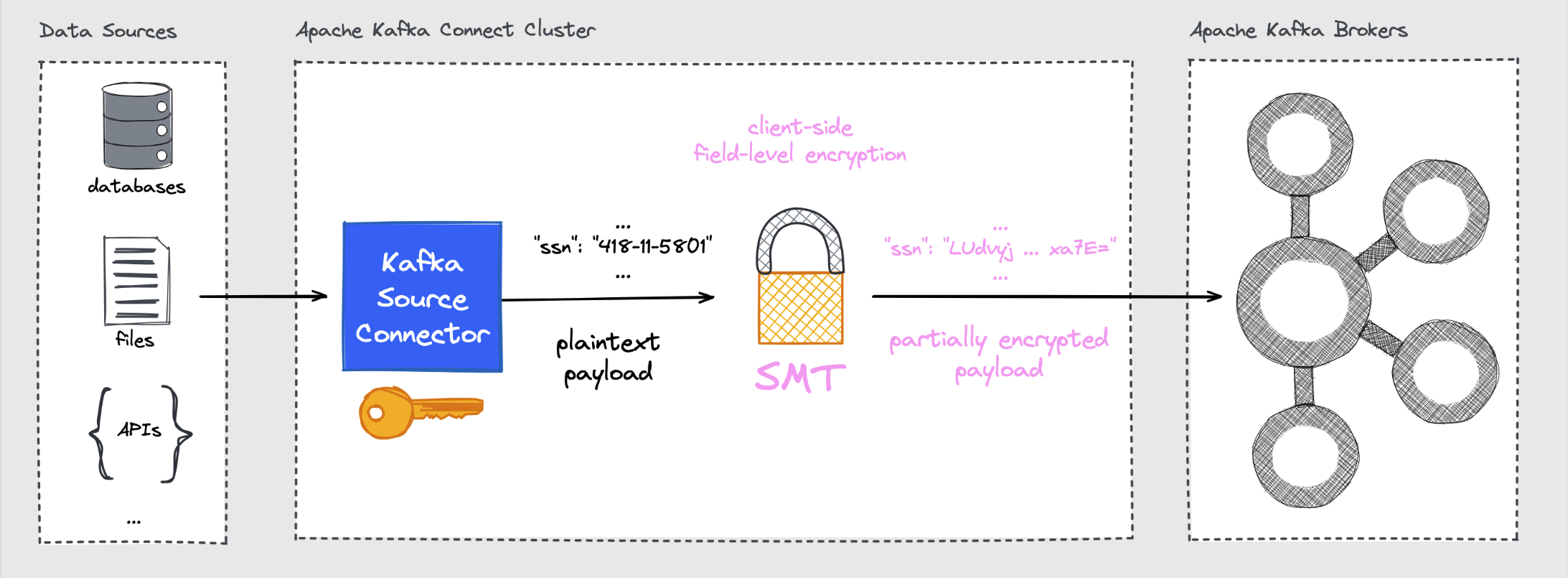

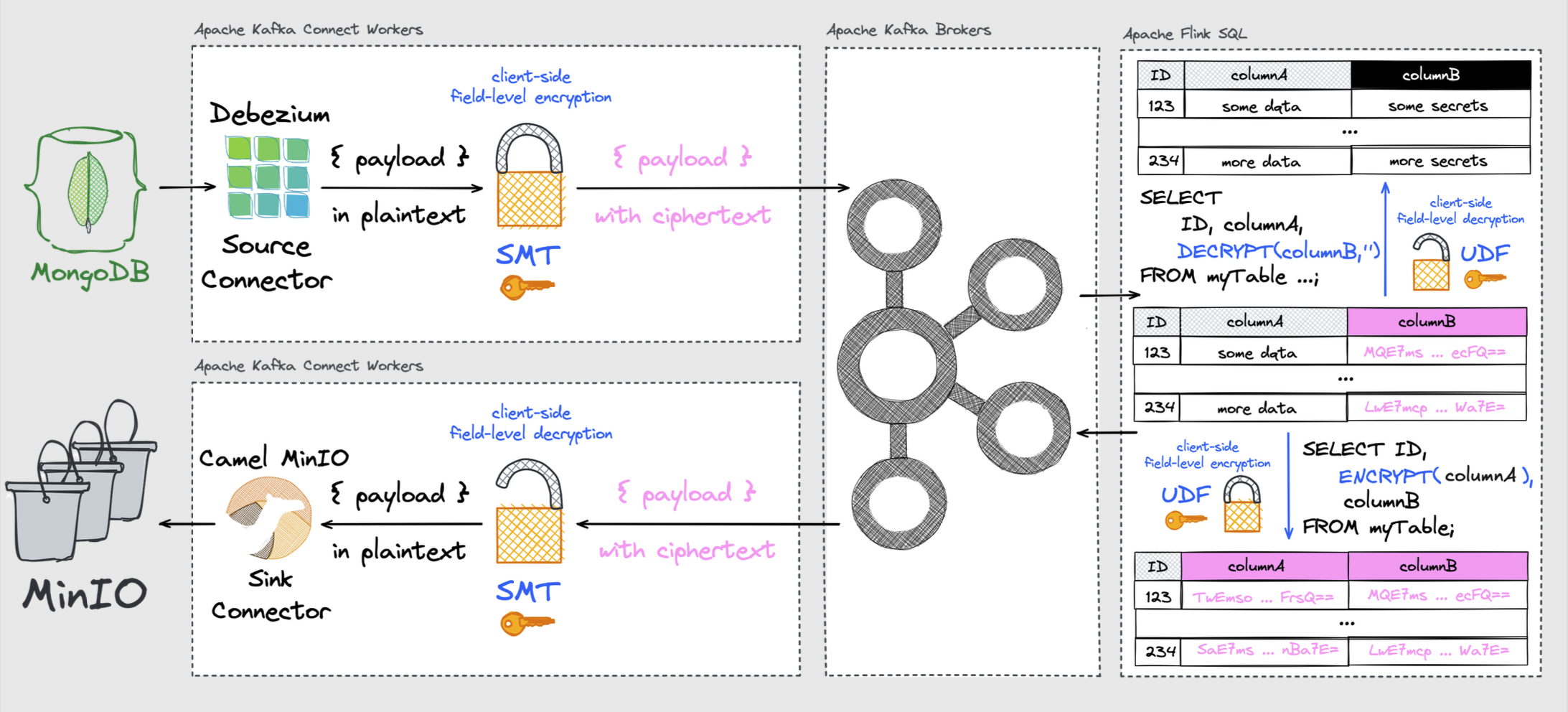

Apache Kafka Connect SMT

Field-Level Encryption with Source Connectors

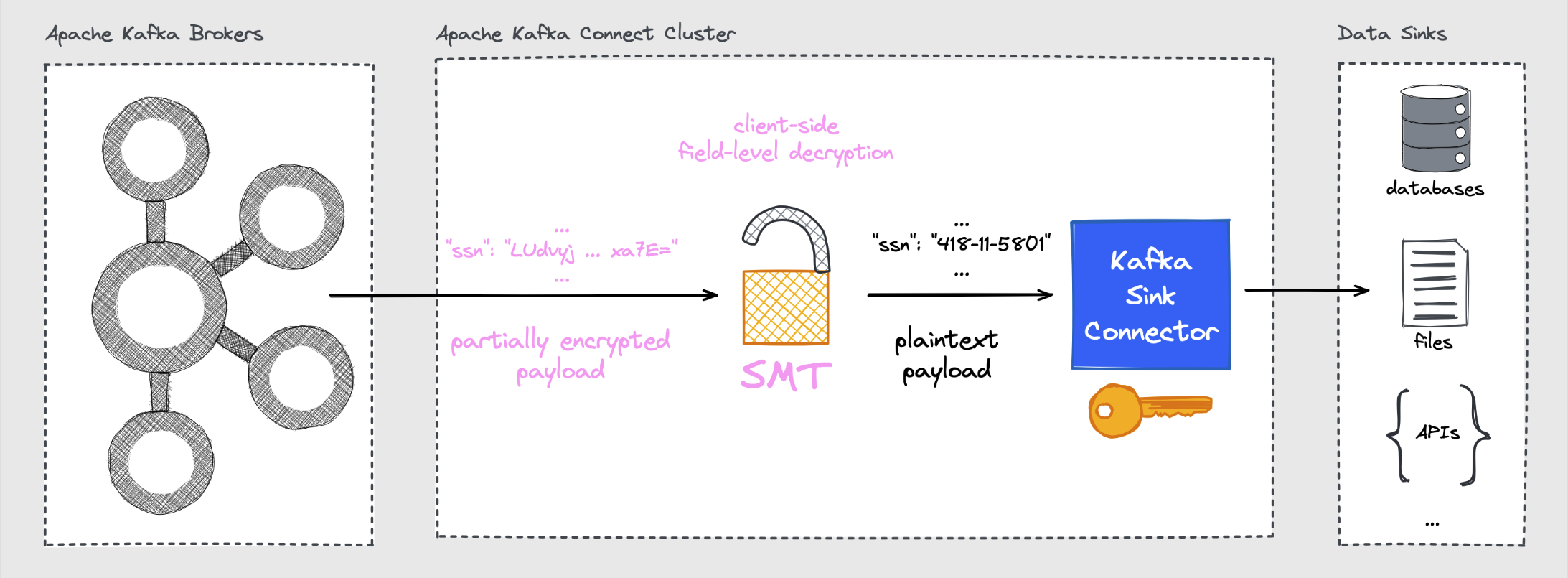

Field-Level Decryption with Sink Connectors

The CipherField Single Message Transformation (SMT) encrypts or decrypts payload fields in combination with virtually any source or sink connector available for Kafka Connect. The SMT works with schemaless JSON and common schema-aware formats (Avro, Protobuf, JSON Schema).

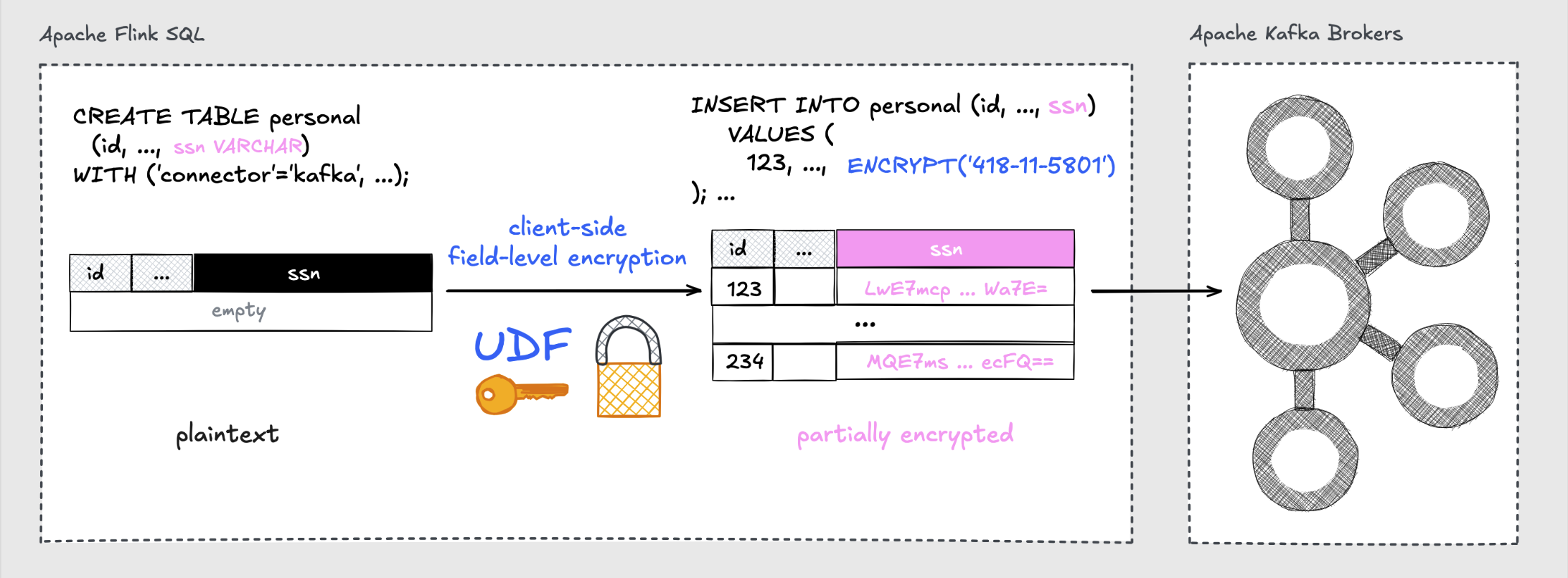

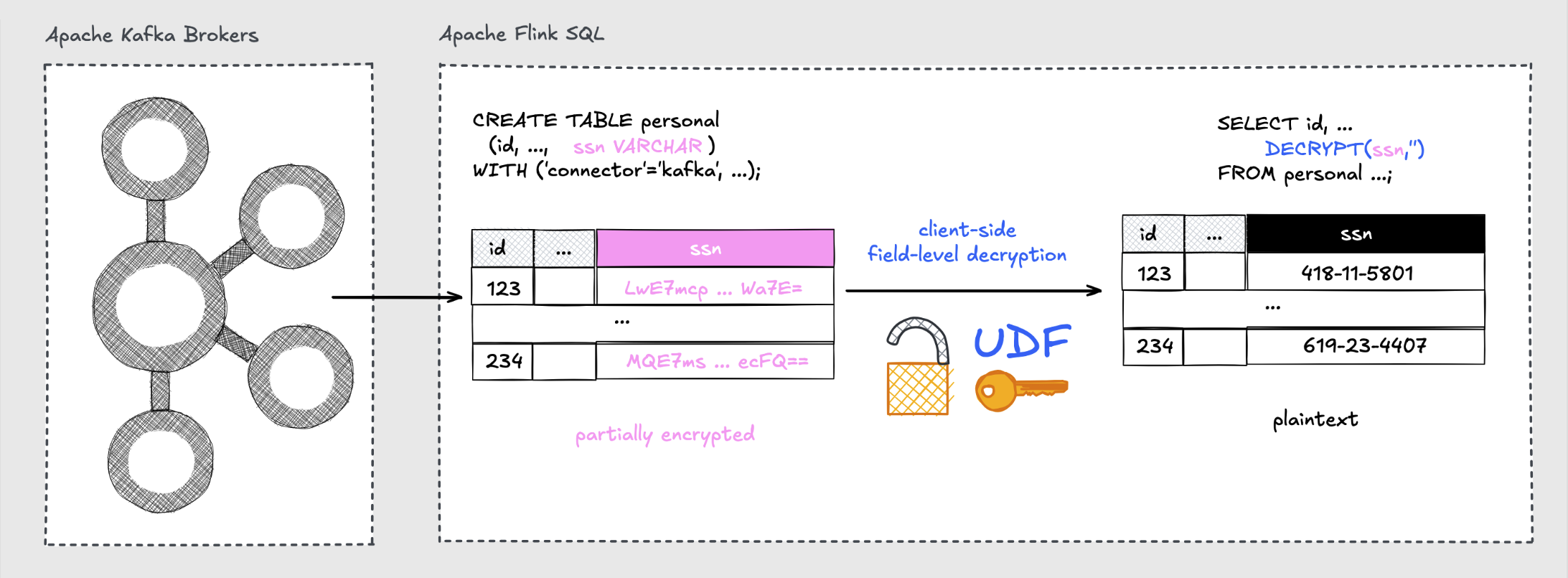

Apache Flink UDFs

Field-Level Encryption with UDFs in Flink SQL

Field-Level Decryption with UDFs in Flink SQL

A set of user-defined functions (K4K_ENCRYPT_*/K4K_DECRYPT_*) can be applied in Flink Table API or Flink SQL jobs. The UDFs can process both primitive and complex Flink Table API data types.

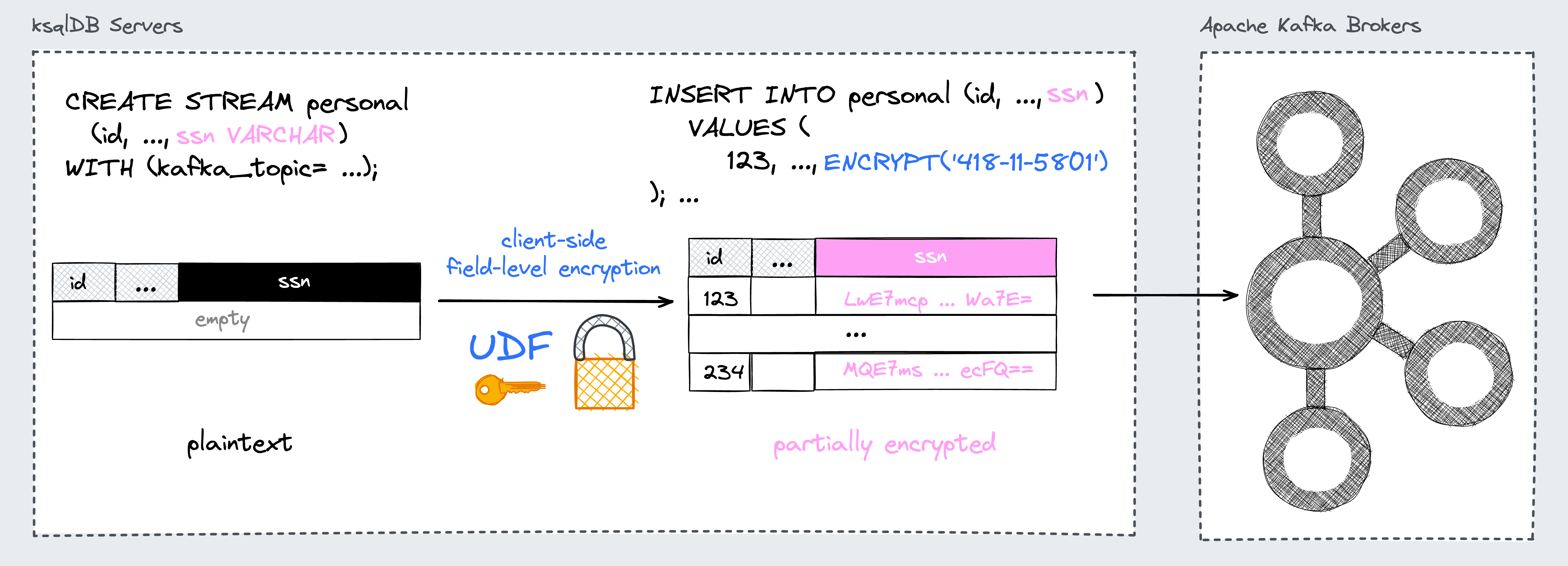

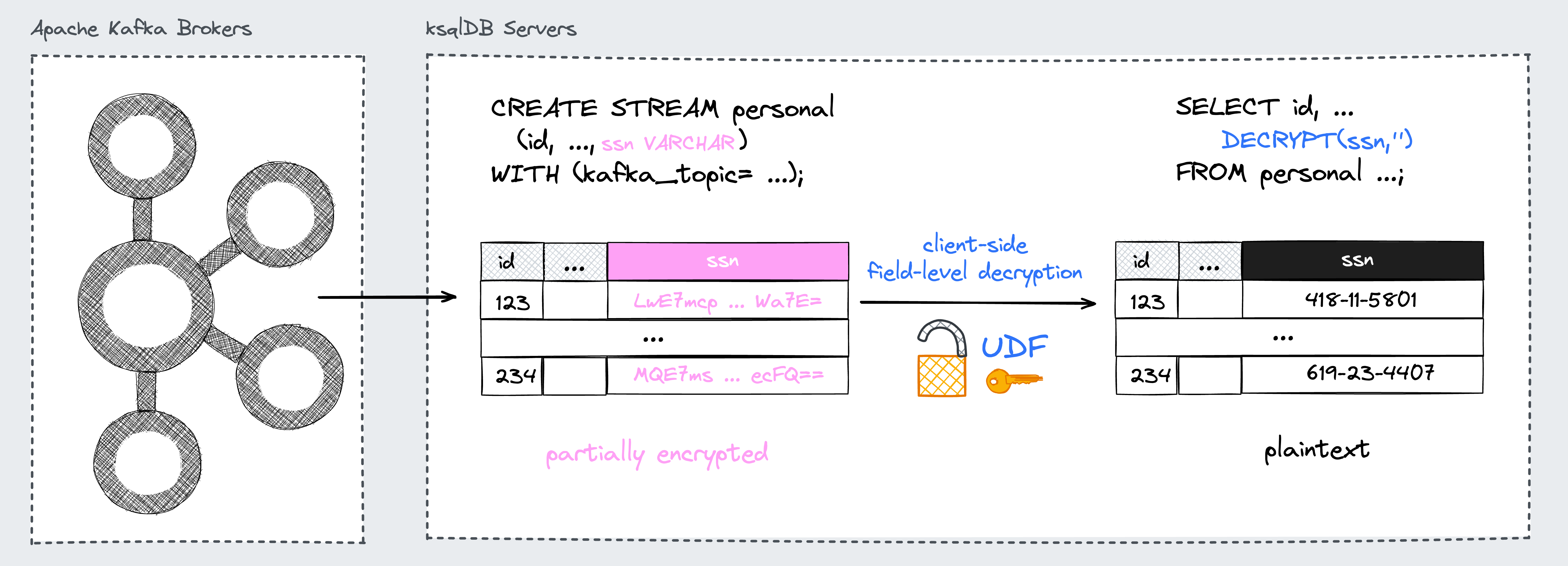

ksqlDB UDFs

Field-Level Encryption with UDFs in ksqlDB

Field-Level Decryption with UDFs in ksqlDB

A set of user-defined functions (K4K_ENCRYPT*/K4K_DECRYPT*) can be applied in ksqlDB STREAM or TABLE queries. The UDFs can process both primitive and complex ksqlDB data types.

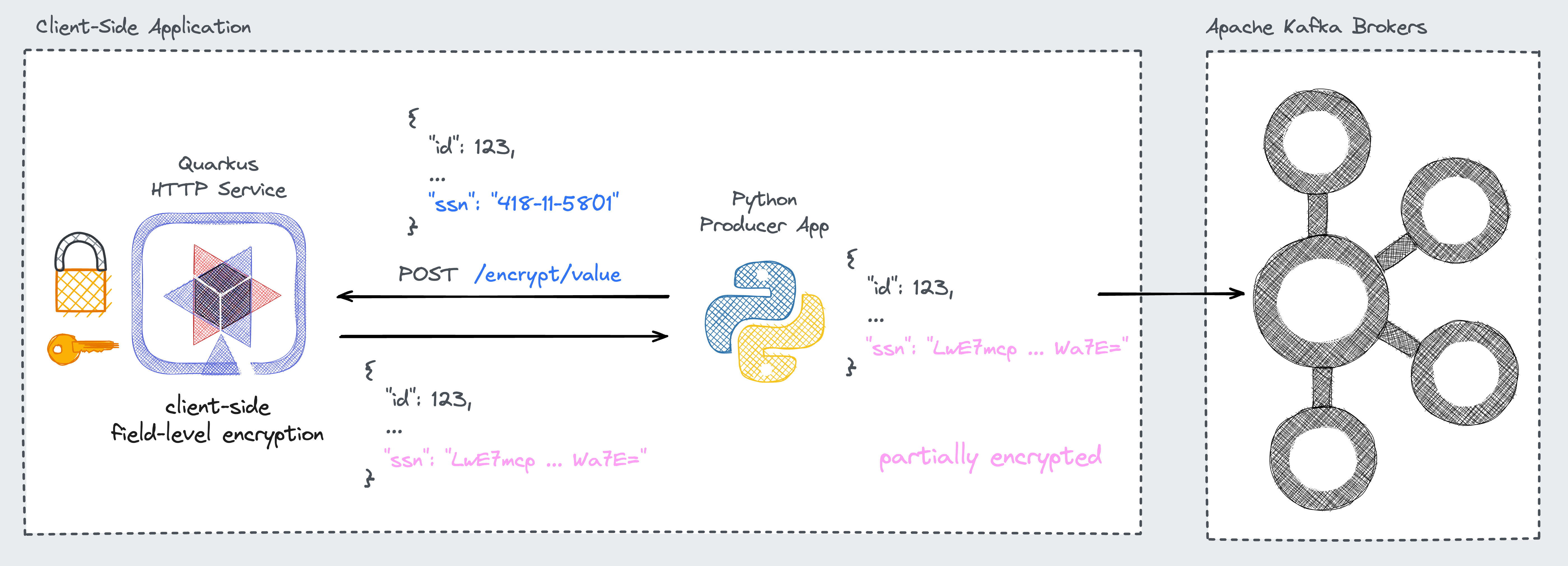

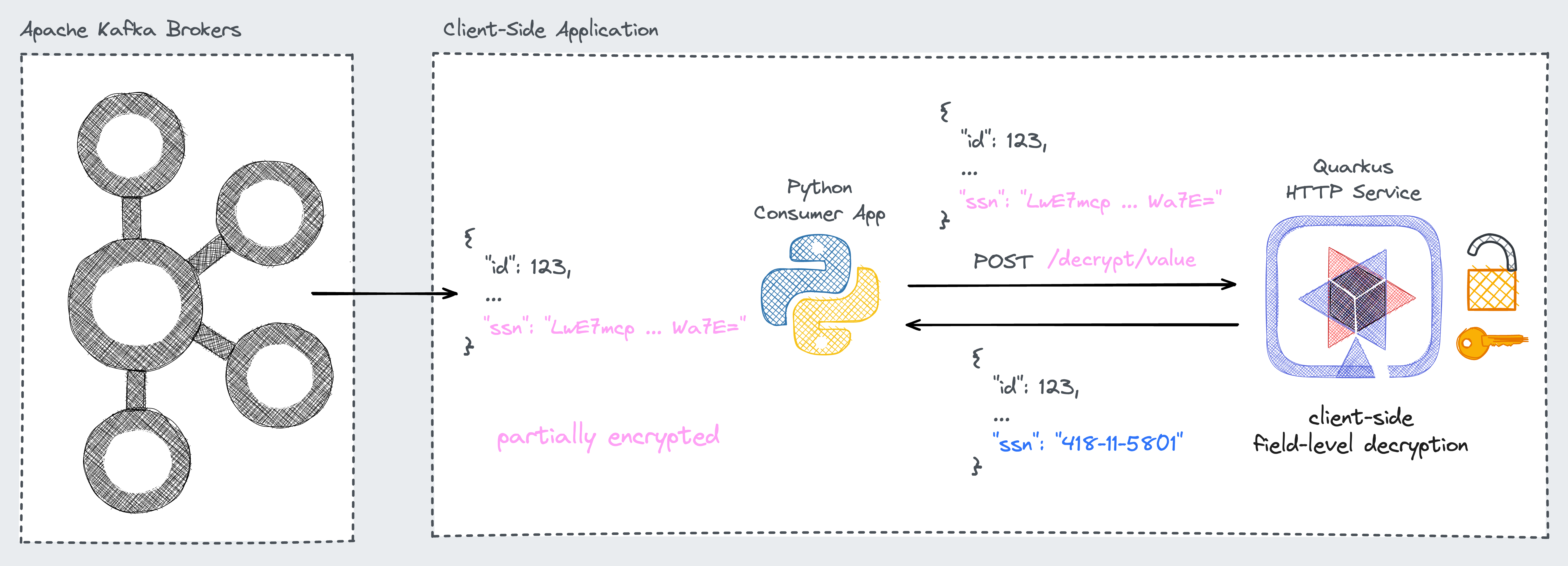

Quarkus HTTP Service

Field-Level Encryption with HTTP API

Field-Level Decryption with HTTP API

A lightweight HTTP service that exposes a web API with dedicated encryption and decryption endpoints. This enables applications written in any language to take part in end-to-end encryption scenarios.

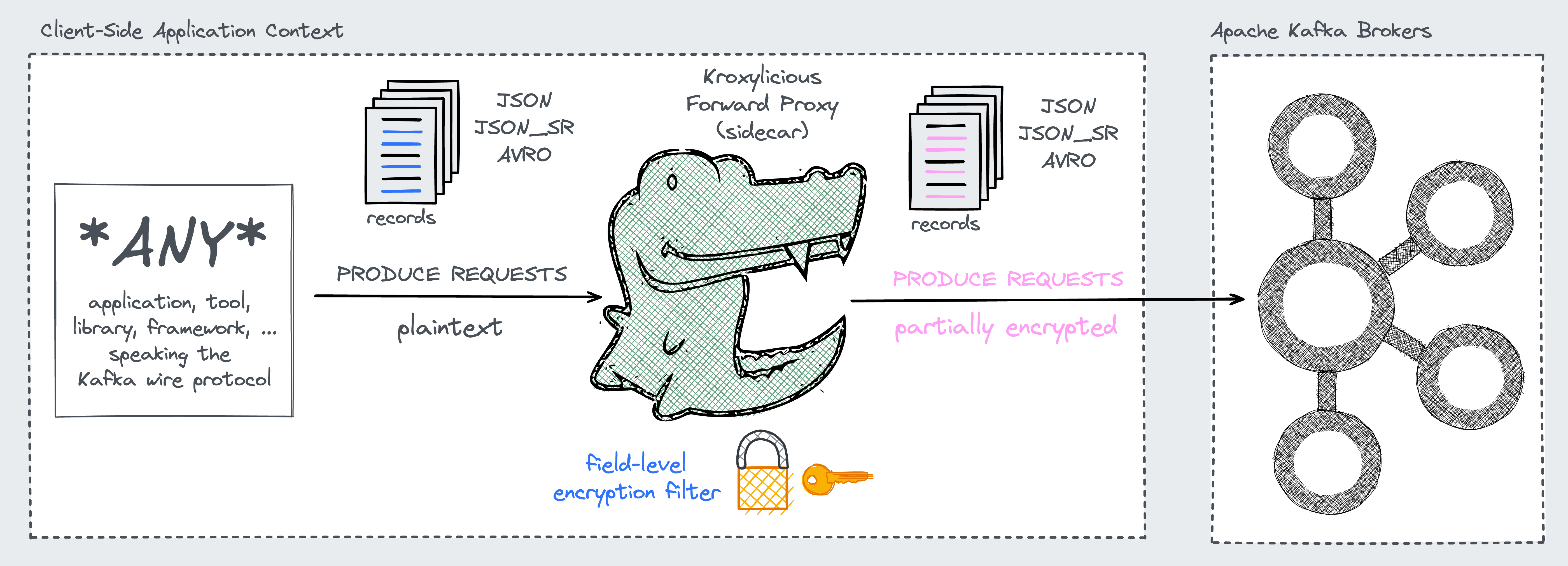

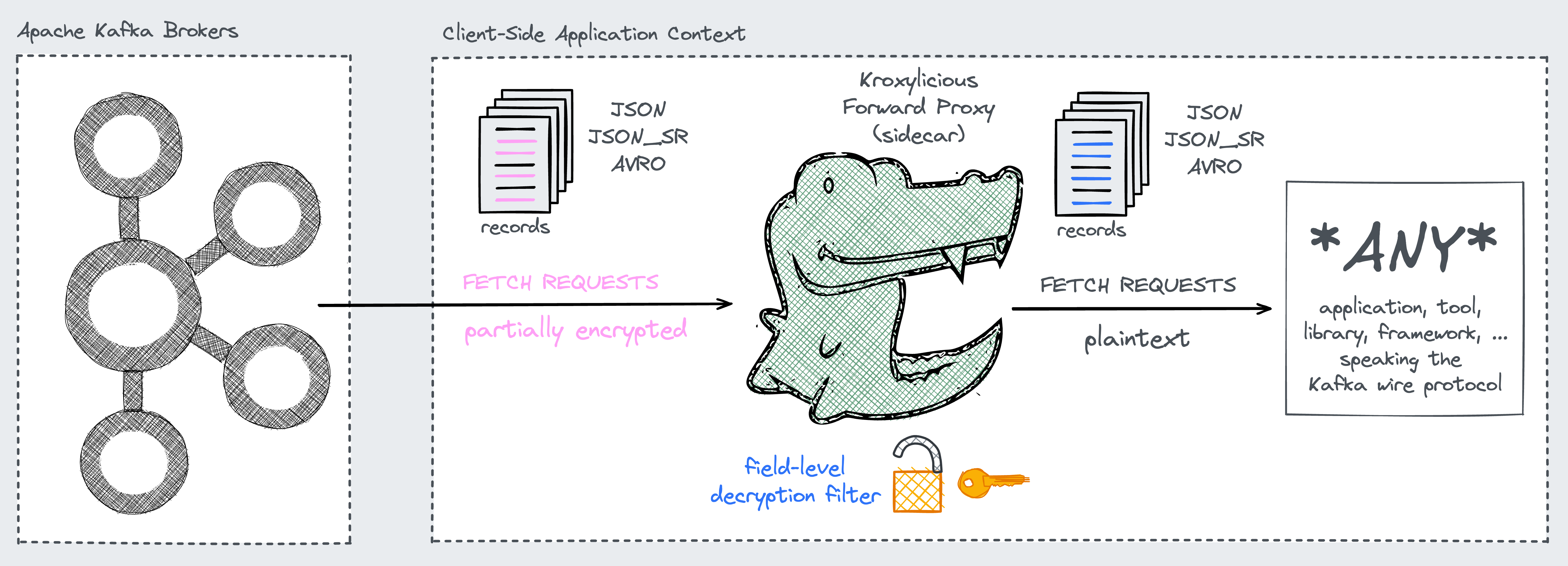

Kroxylicious Proxy Filter

Field-Level Encryption with Proxy Filter

Field-Level Decryption with Proxy Filter

The Kryptonite proxy filter provides transparent, client-agnostic field-level encryption and decryption for Apache Kafka. It runs as a pair of filters inside the Kroxylicious proxy, so no changes to producers, consumers, or Kafka brokers are required.

End-to-End Scenario featuring Module Integrations for Apache Kafka Connect and Apache Flink

Supported Encryption Algorithms & Modes

| Algorithm | Mode | Input | Output | Usage |
|---|---|---|---|---|
| TINK/AES_GCM | AEAD probabilistic | any supported data type | string (Base64) | most cases unless envelope encryption is required (default) |
| TINK/AES_GCM_ENVELOPE_KEYSET | AEAD probabilistic (envelope encryption) | any supported data type | string (Base64) | when you need envelope encryption but want local DEK wrap/unwrap using Tink keysets |
| TINK/AES_GCM_ENVELOPE_KMS | AEAD probabilistic (envelope encryption) | any supported data type | string (Base64) | when you need envelope encryption and must use a remote KEK for DEK wrap/unwrap |
| TINK/AES_GCM_SIV | AEAD deterministic | any supported data type | string (Base64) | equality matches, joins, or aggregations on encrypted data |
| CUSTOM/MYSTO_FPE_FF3_1 | format-preserving encryption | string (specific alphabet) | string (same alphabet as input) | when the output must preserve a specific alphabet (e.g. SSNs, IBANs, ...) |
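The two envelope encryption modes in the table above build on the general envelope pattern: a data encryption key (DEK) encrypts the payload, and a key encryption key (KEK) wraps the DEK so that the wrapped DEK can travel alongside the ciphertext. Below is a minimal Java sketch of that general pattern using JDK `javax.crypto`, not the project's implementation. The class name and the `seal`/`open` helpers are hypothetical, the sketch generates a new DEK per payload, and its KEK is a local key, which is conceptually closer to the keyset-based variant. In the KMS-based variant, the wrap and unwrap steps would instead be calls to a remote KMS, and the KEK would never leave it.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Arrays;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;
import javax.crypto.spec.SecretKeySpec;

// Hypothetical sketch of envelope encryption with plain JDK crypto (not the Kryptonite implementation).
public final class EnvelopeEncryptionSketch {
    private static final int NONCE_BYTES = 12;
    private static final int TAG_BITS = 128;
    private static final SecureRandom RANDOM = new SecureRandom();

    // What gets stored or sent: the wrapped DEK travels together with the payload ciphertext.
    static final class Envelope {
        final byte[] wrappedDek;
        final byte[] ciphertext;

        Envelope(byte[] wrappedDek, byte[] ciphertext) {
            this.wrappedDek = wrappedDek;
            this.ciphertext = ciphertext;
        }
    }

    static SecretKey newAes256Key() {
        try {
            KeyGenerator gen = KeyGenerator.getInstance("AES");
            gen.init(256);
            return gen.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    static Envelope seal(byte[] plaintext, SecretKey kek) {
        SecretKey dek = newAes256Key();                                   // new DEK for this payload
        byte[] ciphertext = gcm(Cipher.ENCRYPT_MODE, dek, plaintext);     // payload encrypted under the DEK
        byte[] wrappedDek = gcm(Cipher.ENCRYPT_MODE, kek, dek.getEncoded()); // DEK wrapped under the KEK
        return new Envelope(wrappedDek, ciphertext);
    }

    static byte[] open(Envelope env, SecretKey kek) {
        byte[] dekBytes = gcm(Cipher.DECRYPT_MODE, kek, env.wrappedDek);  // unwrap the DEK first
        return gcm(Cipher.DECRYPT_MODE, new SecretKeySpec(dekBytes, "AES"), env.ciphertext);
    }

    // AES-GCM helper: encryption output and decryption input use the layout nonce || ciphertext || tag.
    private static byte[] gcm(int mode, SecretKey key, byte[] input) {
        try {
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            if (mode == Cipher.ENCRYPT_MODE) {
                byte[] nonce = new byte[NONCE_BYTES];
                RANDOM.nextBytes(nonce);
                cipher.init(mode, key, new GCMParameterSpec(TAG_BITS, nonce));
                byte[] ct = cipher.doFinal(input);
                byte[] out = Arrays.copyOf(nonce, NONCE_BYTES + ct.length);
                System.arraycopy(ct, 0, out, NONCE_BYTES, ct.length);
                return out;
            }
            cipher.init(mode, key, new GCMParameterSpec(TAG_BITS, input, 0, NONCE_BYTES));
            return cipher.doFinal(input, NONCE_BYTES, input.length - NONCE_BYTES);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e); // includes authentication failures, e.g. a wrong KEK
        }
    }

    public static void main(String[] args) {
        SecretKey kek = newAes256Key(); // assumption: local KEK for illustration only
        Envelope env = seal("card=4111111111111111".getBytes(StandardCharsets.UTF_8), kek);
        System.out.println(new String(open(env, kek), StandardCharsets.UTF_8)); // card=4111111111111111
    }
}
```

Because only the small DEK is wrapped by the KEK, a remote KMS would be contacted for key wrapping rather than for every payload byte, which is the usual motivation for envelope encryption.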

Available Key Management Options

| Key Source | Keyset Storage | Keyset Encryption | Security | Recommended for ... |
|---|---|---|---|---|
| CONFIG | inline as part of configuration | None | lowest | Local Development & Testing or Demos |
| CONFIG_ENCRYPTED | inline as part of configuration | Cloud KMS wrapping key | reasonable | Production (without centralised management) |
| KMS | cloud secret manager | None | reasonable | Production (with centralised management) |
| KMS_ENCRYPTED | cloud secret manager | Cloud KMS wrapping key | highest | Production (with centralised management) |
| NONE | no Tink keysets required | not applicable | highest | Production (with exclusive envelope encryption) |

Key Management Details | Cloud KMS Overview

Community Project Disclaimer

Kryptonite for Kafka is an independent community project. It is neither affiliated with the ASF or the respective upstream projects, nor is it an official product of any company related to the addressed technologies.

Apache License 2.0 · Copyright © 2021–present Hans-Peter Grahsl.